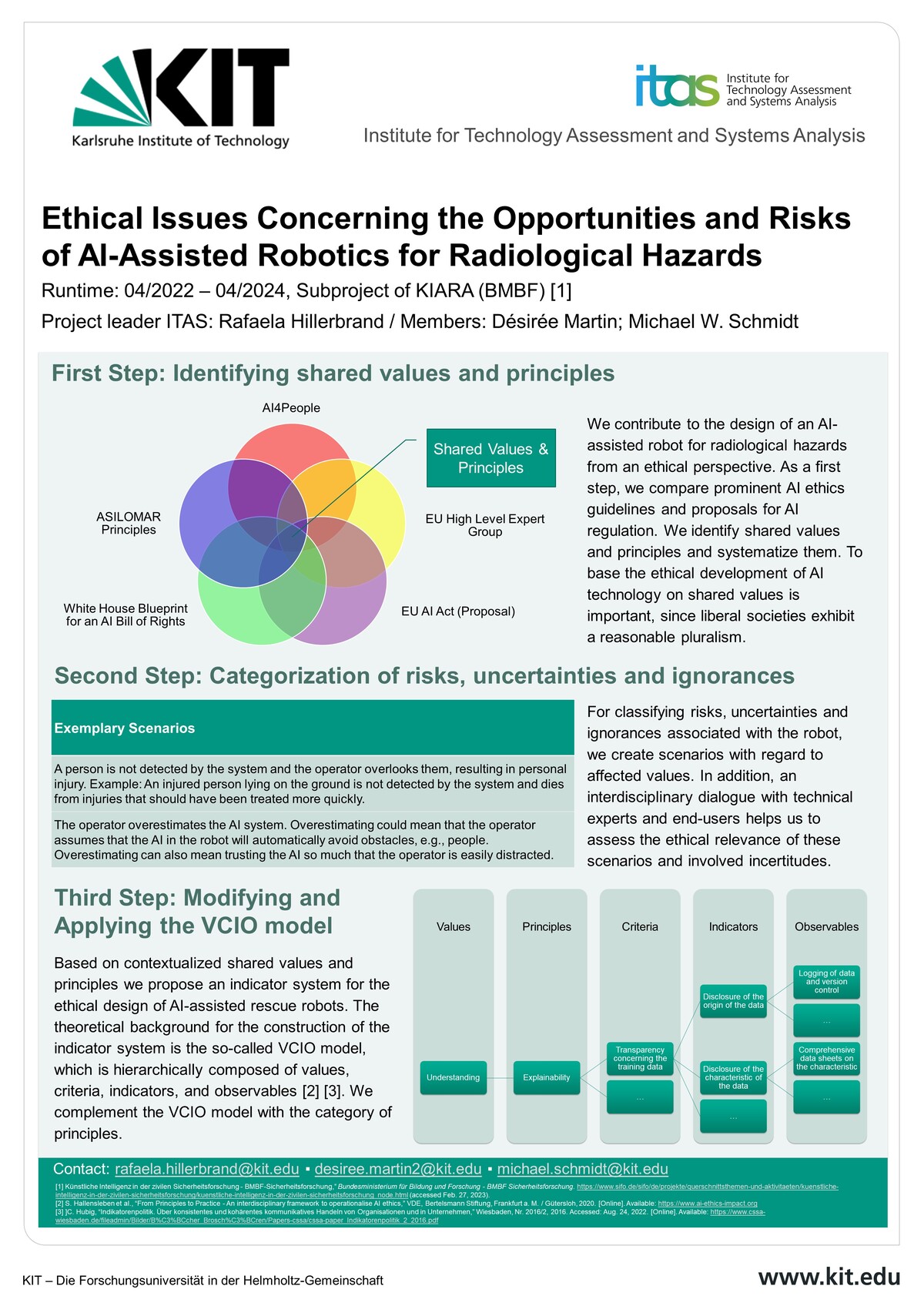

Joint project KIARA – AI assistance for robot-assisted reconnaissance and defense against acute radiological hazards – subproject: Ethical Issues Concerning the Opportunities and Risks of AI-Assisted Robotics for Radiological Hazards

- Project team: Rafaela Hillerbrand (project leader), Désirée Martin, Michael W. Schmidt
- Funding: Federal Ministry of Education and Research (BMBF)
- Start date: 2022
- End date: 2024
- Project partners: Deutsches Rettungsrobotik-Zentrum e. V. (Dortmund), Energy Robotics GmbH (Darmstadt), Telerob Gesellschaft für Fernhantierungstechnik mbH (Ostfildern), Technical University of Darmstadt
- Research group: Philosophy of Engineering, Technology Assessment, and Science

Project description

In acute hazard situations, such as those involving the release of radioactive substances, emergency forces cannot access the hazard site directly because of the high health risks. Remote-controlled robots can be used to establish situational awareness in such cases. In KIARA, modular AI systems are being developed and evaluated for use on mobile robot systems. Here, artificial intelligence provides targeted support for emergency operations, both in the rapid reconnaissance of acute hazard situations and in the implementation of initial measures to defuse them. Reconnaissance and security measures can thus be carried out faster, more effectively, and with lower risks for the emergency forces. The capabilities of the AI systems improve successively through training and self-optimization. New training methods are also being developed for user training.

In the project, representatives of science, industry (SMEs), and a non-profit organization are working closely with authorities and end users on research and practical development.

In doing so, ITAS helps to shape the design and deployment of AI-assisted robotic systems through continuous, prospective techno-ethical accompanying research. The ethics of AI-assisted rescue robotics is a very new field on which little work has been done so far. The accompanying ethical research thus extends basic research in AI and robot ethics, and its application, to a field that has not previously been addressed.

Prospective and formative technology ethics aims at the acceptability of technical developments. It identifies the corresponding problem areas while the technology is still being developed, so that solutions can be worked out in an interdisciplinary manner.

The aim is (1) to develop regulations and indicator systems for the design and application of AI assistance for robot-assisted reconnaissance and defense against acute radiological hazard situations and (2) to understand their limits.

What is innovative here is the linking of ethical aspects of autonomous systems with explicit questions of how to deal with risks, uncertainties, and insecurities.

To this end, the subproject investigates not only the ethical specifics, such as the potential dangers and opportunities of using AI assistance for robot-assisted reconnaissance and defense against acute radiological hazard situations, but also the specific epistemic characteristics of such systems. These are the inherent uncertainties, especially in data generation, acquisition, and processing, which may lead to misjudgments by the AI system, by humans, or by both.

In addition to the interface between ethics and technology, the interface between ethics and law is of central importance here. The subproject examines the extent to which ethics and law agree, diverge in their assessments, possibly conflict, or complement each other.

This examination relates, on the one hand, to the concrete topic of autonomous systems for reconnaissance and defense against radiological hazard situations and, on the other hand, to the handling of risks, uncertainties, and insecurities, whose terminology is to be clarified from an ethical and legal point of view.

By the end of the project, ITAS will provide various publications, a blueprint for the regulation of AI-assisted rescue systems, and an understanding of which ethical aspects can be quantified and meaningfully mapped in indicators and which cannot and should therefore be addressed procedurally. This blueprint can also be used for other high-risk AI-assisted robotic applications.

Contact

Karlsruhe Institute of Technology (KIT)

Institute for Technology Assessment and Systems Analysis (ITAS)

P.O. Box 3640

76021 Karlsruhe

Germany

Tel.: +49 721 608-26041
