Deepfakes: Approaching a complex phenomenon

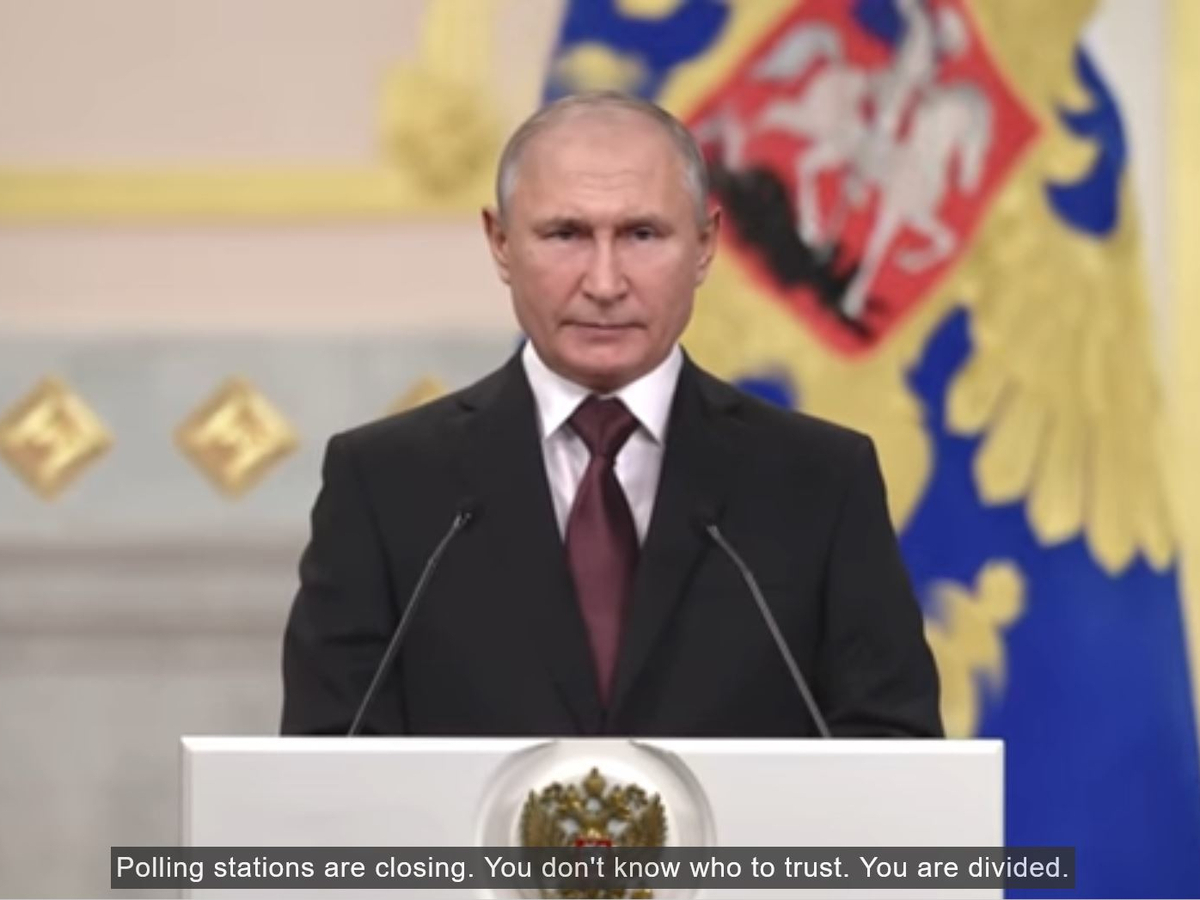

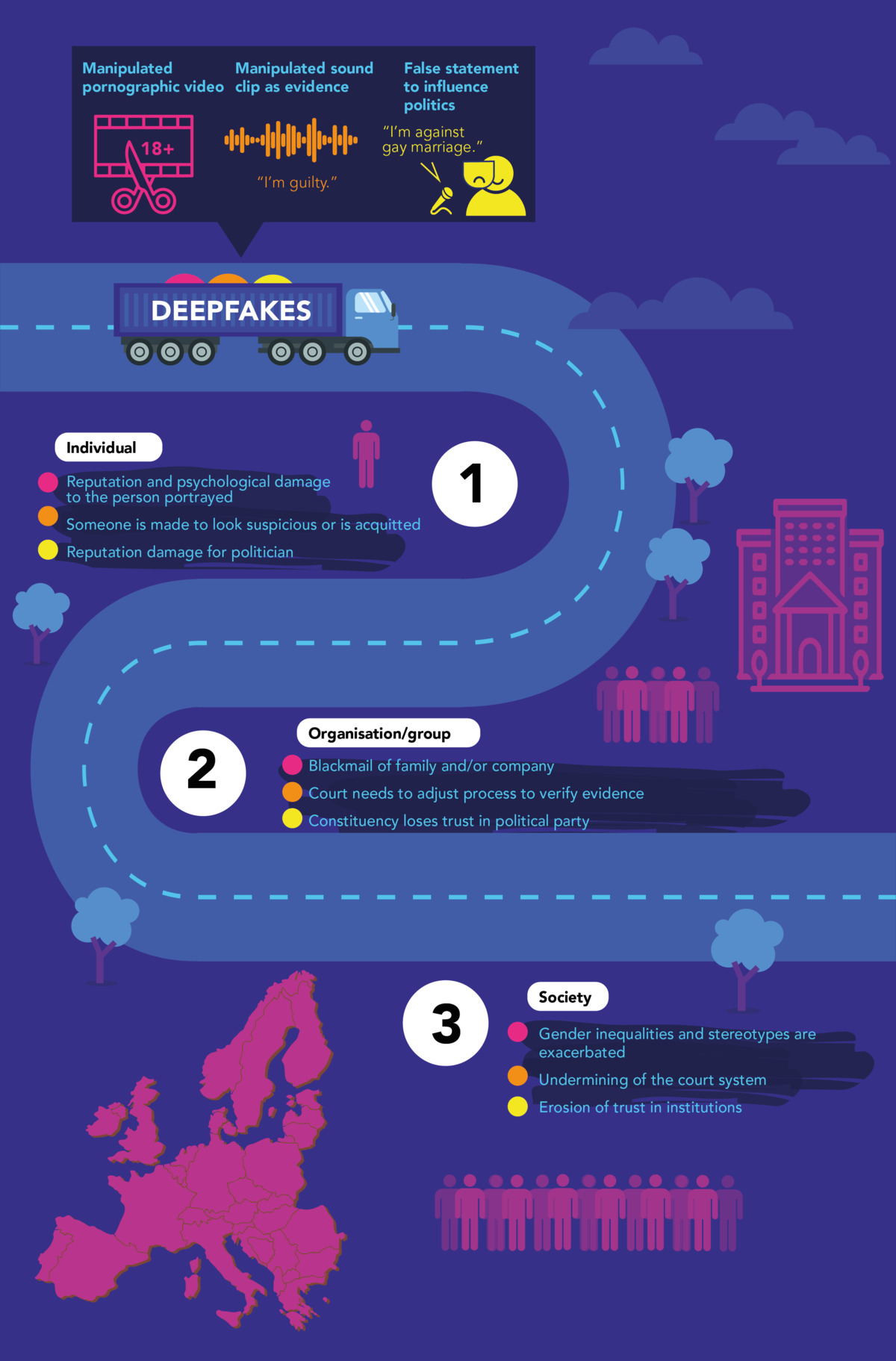

Deepfakes are photos, audio recordings, or videos that look realistic but have been manipulated with AI technologies and are mostly generated autonomously. This technology opens up new possibilities for art, medicine, and education – but also involves considerable risks. “Deepfakes can be used to spread fake news and disinformation or to discredit individuals,” explains Jutta Jahnel, who investigates the impacts of the technology at ITAS. “They are also a serious threat to science and democracy since these systems depend particularly on a reliable evidence base,” adds the expert, who specializes in technology assessment of learning systems.

Interdisciplinary pilot study

Against this background, a project led by ITAS aims to bring together the perspectives of different disciplines on the responsible use of these new technical possibilities. In addition to technology assessment, computer science, communication science, law, and qualitative social research at KIT are involved. Across these disciplines, the researchers want to identify the societal implications of deepfakes and disinformation and to pinpoint gaps in existing regulatory proposals. In doing so, they consider not only the interests and needs of societal actors from politics, science, and journalism, but also those of citizens. A planned pilot study will focus in particular on users.

Deepfake research for the European Parliament

The project team can build on the results of a research project for the European Parliament’s Panel for the Future of Science and Technology (STOA). In this project, ITAS researchers investigated the regulation of deepfakes in the EU in cooperation with colleagues from the Netherlands, the Czech Republic, and Germany (Fraunhofer ISI). Their final report will be officially presented in mid-October. Among other things, the report recommends not only regulating the production of deepfakes, but also focusing on the circumstances of their social dissemination and reception. (20.10.21)

Further links and documents:

- Project page Interdisciplinary approaches to deepfakes

- STOA report Tackling deepfakes in European policy on dealing with deepfakes in the new AI legal framework

- KIT Press Release Deepfakes: Manipulationen als Gefahr für die Demokratie (Deepfakes: Manipulations as a Danger for Democracy)